Why Beta Testing Is Critical in AI Tools Development

AI applications are no longer experimental. They have become core business systems that drive decision-making, automation, and customer experience across industries. Businesses now rely on AI-powered tools such as chatbots and recommendation engines for accurate, responsible large-scale operations, predictive analytics, and intelligent automation.

AI applications also differ fundamentally from traditional software. They consume data, learn from it, adapt to how users interact with them, and handle real-world inputs flexibly. This complexity introduces new kinds of risk that standard testing procedures alone cannot address.

From our team’s experience working closely with AI-driven products, one truth has become clear: Beta Testing is the most critical phase in AI tools development. It is where theory meets reality. It is where assumptions are challenged by real users, real data, and real environments.

Every AI-focused project we’ve worked on, across industries and use cases, has reinforced the same lesson: without structured beta testing, even the most advanced AI models remain incomplete. This blog reflects not only best practices but also the hands-on experience, discipline, and dedication our QA teams bring to validating AI tools before they reach production.

TL;DR

- Beta Testing validates AI tools in real-world environments.

- AI behaves differently with live users and real data.

- Beta Testing helps detect bias, accuracy gaps, and ethical risks.

- Real user feedback improves trust, usability, and reliability.

- Expert QA-led Beta Testing reduces failures and launch risks.

Key Takeaways

- Controlled testing environments cannot replicate live data, unpredictable inputs, and real user behavior.

- Beta Testing helps uncover ethical, compliance, and decision-making risks early.

- AI performance changes when exposed to evolving datasets and real workloads.

- Beta Testing highlights usability gaps and confidence issues.

- Structured processes and domain expertise are critical for AI readiness.

Understanding Beta Testing in AI Tools Development

Beta Testing is the period during which an AI tool is made available to a selected group of real users outside the internal development team. Unlike alpha or internal testing, this stage exposes the system to real data, real users, and real usage conditions that cannot be fully simulated in a test lab.

For AI tools, this phase validates far more than basic functionality. It assesses how the model reacts to real situations, how users interpret and interact with AI outputs, and how the system performs and scales under realistic workloads. Specifically, it evaluates:

- Model behavior under realistic conditions: Beta Testing exposes AI models to noisy, incomplete, and unpredictable inputs in realistic operating conditions to show how they actually behave.

- Decision consistency and reliability: Beta Testing checks whether AI decisions stay consistent and predictable across similar conditions and varying data distributions.

- User interaction patterns: Beta Testing with real users reveals how well users understand AI outputs, where misunderstandings arise, and how trust is built.

- System performance and scalability: Beta Testing determines how well the AI system copes with actual workloads, concurrent usage, and production-level demand.

Beta Testing serves as the final checkpoint before an AI tool is trusted with real-world decisions and large-scale deployment.

Also Read: System Integration Testing: Types, Benefits & Best Practices

Why Beta Testing Is Especially Critical for AI Tools

AI tools operate in fast-changing, unpredictable settings where outcomes depend on real users, real data, and real-world context. Unlike conventional software, AI systems continuously interpret inputs, make probabilistic decisions, and evolve over their lifetime. This is why Beta Testing is so important for verifying AI tools before large-scale use.

Beta Testing bridges controlled development environments and live production. It exposes AI systems to scenarios that internal testing cannot fully reproduce, and it helps ensure they act responsibly, consistently, and reliably when real users depend on them.

AI Models React Differently in Real-World Conditions

AI models are commonly trained and evaluated on historical or carefully curated datasets. This helps establish baseline accuracy, but it cannot capture the details of actual usage. Production data is typically messy, incomplete, noisy, or inconsistent, and user behavior rarely follows predicted patterns.

Beta Testing reveals how AI tools respond to real-world conditions, including:

- Unstructured or unexpected inputs

- New and evolving user behavior patterns

- Environment-specific data variations

These factors can significantly affect model performance. Real usage during Beta Testing reveals limitations that orderly test environments cannot fully simulate, allowing teams to refine the models before a wider release.

Detecting Bias and Ethical Risks Early

Bias remains one of the most significant and contested risks in AI. Even well-designed models can produce biased or unfair results because of limitations in training data, labeling methods, or historical imbalances.

Through Beta Testing, teams can analyze AI outputs across diverse users, contexts, and scenarios to identify:

- Unequal prediction accuracy across groups

- Demographic or contextual bias

- Ethical, legal, and compliance-related concerns

Detecting and fixing these problems early in the Beta Testing phase helps companies build responsible AI systems and protects users, businesses, and brand reputation.
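One way to make "unequal prediction accuracy across groups" measurable is to compute accuracy per user segment from beta feedback and look at the largest gap. The sketch below is illustrative only: the segment names, labels, and record layout are hypothetical, and a real fairness review would use metrics and group definitions chosen for the specific product and its legal context.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} from (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Hypothetical beta records: (user segment, model prediction, confirmed outcome)
beta_records = [
    ("segment_a", "approve", "approve"),
    ("segment_a", "deny", "deny"),
    ("segment_a", "approve", "approve"),
    ("segment_a", "approve", "deny"),
    ("segment_b", "approve", "deny"),
    ("segment_b", "deny", "deny"),
    ("segment_b", "deny", "approve"),
    ("segment_b", "approve", "approve"),
]

per_group = accuracy_by_group(beta_records)
gap = accuracy_gap(per_group)
print(per_group)  # accuracy for each segment
print(gap)        # difference between best and worst segment
```

A large gap does not prove bias on its own, but it flags where reviewers should look more closely at the data and the model's decisions.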

Validating Accuracy, Stability, and Decision Quality

AI accuracy is probabilistic: outcomes can change with the input, context, or data distribution. Accuracy is therefore not the only measure to consider; consistency and stability matter just as much.

Beta Testing allows teams to evaluate AI performance under real workloads by measuring:

- Error rates during live usage

- Consistency of predictions across similar scenarios

- Stability of outputs over time

This validation ensures AI tools meet acceptable performance thresholds and remain dependable in production environments where decisions may have real consequences.
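The measurements listed above can be approximated with very little code. The following is a minimal sketch, assuming beta logs can be reduced to (predicted, actual) pairs and to lists of outputs grouped by similar scenario; the function names and scenario labels are hypothetical.

```python
from collections import Counter


def error_rate(outcomes):
    """Fraction of (predicted, actual) pairs where the prediction was wrong."""
    if not outcomes:
        raise ValueError("no outcomes to score")
    wrong = sum(1 for predicted, actual in outcomes if predicted != actual)
    return wrong / len(outcomes)


def consistency(outputs_by_scenario):
    """Average share of outputs that match the most common output per scenario."""
    shares = []
    for outputs in outputs_by_scenario.values():
        most_common_count = Counter(outputs).most_common(1)[0][1]
        shares.append(most_common_count / len(outputs))
    return sum(shares) / len(shares)


# Hypothetical live-usage outcomes: (model prediction, confirmed result)
live_outcomes = [("a", "a"), ("b", "a"), ("a", "a"), ("b", "b")]

# Hypothetical outputs for near-identical requests, grouped by scenario
similar_requests = {
    "refund_request": ["escalate", "escalate", "escalate", "reply"],
    "password_reset": ["reply", "reply"],
}

print(error_rate(live_outcomes))      # share of wrong predictions
print(consistency(similar_requests))  # 1.0 means fully consistent outputs
```

Tracking the same two numbers per day or per week during the beta gives a simple view of stability over time.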

Improving User Experience and Trust

Even highly accurate AI tools can fail if users do not trust or understand them. User perception plays a critical role in the adoption and success of AI-driven products.

During Beta Testing, real users provide insight into:

- How AI-generated outputs are interpreted

- Whether results feel reliable and explainable

- Friction points in AI-driven workflows

This feedback helps teams refine design, messaging, transparency, and explanation methods. By resolving usability and trust problems early, Beta Testing helps make AI applications not only smart but also usable and trustworthy.

Also Read: Top Software Testing Companies

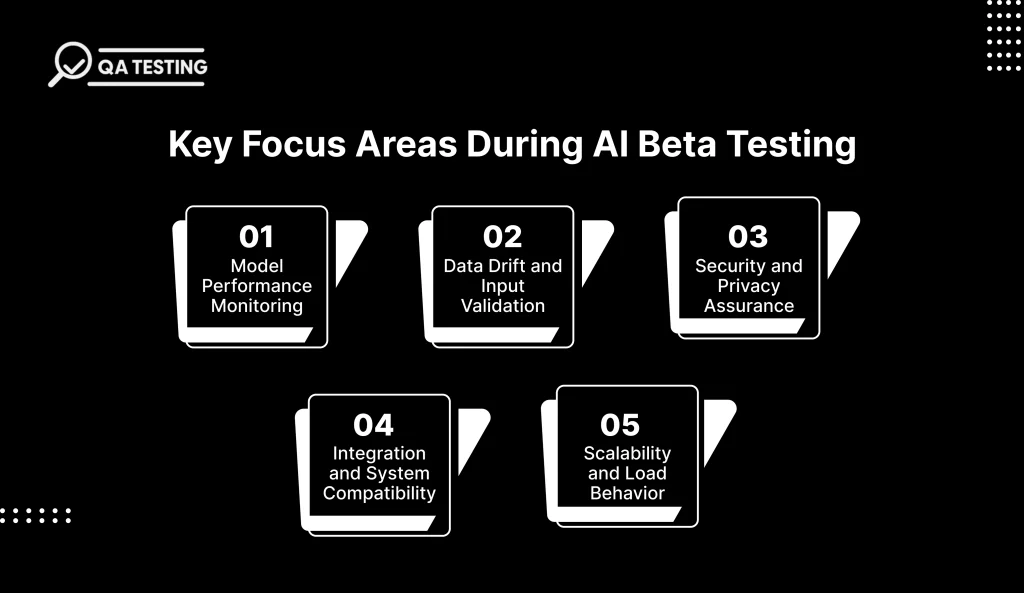

Key Focus Areas During AI Beta Testing

For AI tools to be production-ready, Beta Testing must go beyond surface-level validation. It should focus on critical technical, operational, and user-centric areas that directly impact reliability, security, and scalability in real-world environments.

Model Performance Monitoring

Ongoing monitoring of model performance during AI Beta Testing is vital for understanding how the system behaves in real-world scenarios. Real-world use usually introduces variation that affects accuracy and response quality. Beta Testing helps teams spot performance drops, unexpected outputs, and early signs of model drift, so these can be corrected before the full launch.
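A simple way to spot performance drops during a beta is a rolling-window accuracy check that raises a flag when recent results fall below a chosen floor. This is a sketch under stated assumptions: the class name, window size, and threshold are hypothetical, and it presumes each prediction can eventually be marked correct or incorrect.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the most recent `window_size` predictions."""

    def __init__(self, window_size, alert_below):
        self.results = deque(maxlen=window_size)  # oldest results drop off automatically
        self.alert_below = alert_below

    def record(self, was_correct):
        self.results.append(bool(was_correct))

    def accuracy(self):
        if not self.results:
            return None
        return sum(self.results) / len(self.results)

    def should_alert(self):
        # Only alert once the window is full, to avoid noise from tiny samples.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.alert_below


monitor = RollingAccuracyMonitor(window_size=4, alert_below=0.75)
for was_correct in [True, True, True, True, False, False]:
    monitor.record(was_correct)

print(monitor.accuracy())      # accuracy over the last 4 predictions
print(monitor.should_alert())  # True when recent accuracy is below the floor
```

In practice the window size and floor would be tuned to the product's traffic volume and acceptable error level.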

Data Drift and Input Validation

Real-world data keeps changing, and AI models must keep up with those changes to remain effective. Beta Testing reveals shifts in data distribution, changes in input quality, and inputs the model never saw during training. Detecting data drift during Beta Testing allows timely retraining and input validation, which helps keep the model stable over longer periods.
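One common way to quantify a shift in a numeric input feature is the Population Stability Index (PSI), which compares how values fall into bins in a reference sample versus a live sample. The sketch below is a plain-Python illustration, not a production implementation: the function name, bin count, and sample data are assumptions, and the often-quoted rule of thumb that values above roughly 0.25 indicate a significant shift should be treated as a starting point rather than a standard.

```python
import math


def population_stability_index(expected, actual, bins=10, epsilon=1e-6):
    """PSI between a reference sample (expected) and a live sample (actual)."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    low, high = min(expected), max(expected)
    if low == high:
        raise ValueError("expected sample has no spread to bin")
    width = (high - low) / bins

    def bin_shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Values outside the reference range are clamped into the edge bins.
            index = min(max(index, 0), bins - 1)
            counts[index] += 1
        # epsilon avoids log(0) and division by zero for empty bins
        return [max(count / len(values), epsilon) for count in counts]

    expected_shares = bin_shares(expected)
    actual_shares = bin_shares(actual)
    return sum(
        (a - e) * math.log(a / e)
        for e, a in zip(expected_shares, actual_shares)
    )


# Hypothetical feature values: training data vs. inputs seen during beta
training_sample = [i / 100 for i in range(100)]
beta_sample = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values

print(population_stability_index(training_sample, training_sample))  # no shift
print(population_stability_index(training_sample, beta_sample))      # clear shift
```

Running a check like this per feature on a schedule during the beta gives an early, if coarse, signal that retraining or stricter input validation may be needed.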

Security and Privacy Assurance

AI tools frequently handle sensitive data, making security and privacy a major concern. Beta Testing verifies how data is processed, stored, and accessed under normal usage conditions. Security holes, incorrect access restrictions, and compliance gaps surface at this stage, and identifying them early reduces the risk of data breaches and regulatory problems after launch.

Integration and System Compatibility

AI tools rarely work in isolation; they must integrate cleanly with existing systems, APIs, and workflows. Beta Testing confirms that data moves correctly between the integrated platforms and that the AI tool performs consistently across environments. This validation helps prevent integration failures and operational disruptions in production.

Scalability and Load Behavior

As AI tools move toward full deployment, they must handle growing user demand without performance degradation. Beta Testing with real users reveals how the system responds to increased load, peak usage, and concurrent requests. Evaluating scalability during Beta Testing allows teams to address infrastructure limitations and ensure stable performance as adoption increases.

Also Check: User Acceptance Testing (UAT): Types, Benefits & Best Practices

Effective Beta Testing Strategies for AI Tools

Well-executed Beta Testing helps ensure AI tools do not let users down in real usage. A well-planned approach lets teams validate accuracy, find bias, increase user trust, and prepare AI systems for large-scale production with fewer post-launch risks and failures.

- Use diverse beta user groups: Diverse beta users expose AI tools to varied behaviors, inputs, and contexts, helping Beta Testing uncover edge cases, bias risks, and performance gaps missed in controlled environments.

- Combine quantitative metrics with qualitative feedback: Beta Testing should balance performance metrics like accuracy and latency with user feedback to understand both the technical behavior and real-world usability of AI tools.

- Continuously monitor AI behavior: Ongoing monitoring during Beta Testing helps identify accuracy drops, anomalies, and data drift early, ensuring AI models remain stable and reliable throughout the testing phase.

- Include human-in-the-loop validation: Human oversight during Beta Testing allows reviewers to evaluate AI decisions, flag incorrect outputs, and provide contextual judgment where automated validation is insufficient.

- Partner with AI-experienced QA teams: QA teams with AI expertise apply specialized Beta Testing methods to validate model behavior, fairness, scalability, and real-world readiness more effectively.

Our team’s practical experience shows that these strategies make Beta Testing yield useful insights rather than surface validation. A rigorous method turns Beta Testing into a strategic advantage rather than a release formality.

Role of Beta Testing in AI Development

In AI development, the Beta Testing phase validates system performance in real-world conditions where data, users, and environments are unpredictable. Unlike traditional software, AI systems rely on probabilistic models and adaptive learning, which makes production-like validation essential.

During Beta Testing, AI tools interact with real users and live data, giving developers the opportunity to check accuracy, stability, and consistency of decision-making. This helps confirm that the models remain reliable outside controlled test environments.

- Validates AI performance under real data variability: Beta Testing exposes AI models to noisy, incomplete, and unpredictable real-world data to confirm stable and accurate behavior.

- Detects data and concept drift early: Continuous Beta Testing identifies shifts in data patterns and model understanding that can reduce accuracy over time.

- Assesses decision stability and confidence levels: Beta Testing evaluates whether AI outputs remain consistent and trustworthy across similar inputs and changing conditions.

- Identifies bias and fairness risks: Real-user Beta Testing helps uncover unequal outcomes or demographic bias before large-scale deployment.

- Confirms production readiness and scalability: Beta Testing verifies system performance, infrastructure stability, and reliability under increasing user load.

Why Beta Testing Is Critical for Reliable AI Development

Beta testing acts as a filter that checks how AI tools perform with unpredictable real-world data, users, and environments. It helps ensure that AI systems deliver consistent, accurate, and responsible outcomes outside the controlled settings of development.

- Validates real-world AI behavior: Exposing AI models to live data and users through beta testing confirms whether they perform stably under unpredictable inputs and usage patterns.

- Measures reliability under real workloads: Testing in production-like conditions makes it possible to assess error rates, latency, and decision consistency at scale.

- Detects data and concept drift early: Continuous beta testing identifies changes in data patterns that may reduce model accuracy over time.

- Improves decision thresholds and safeguards: Insights from beta testing support the refinement of confidence levels, fallback mechanisms, and preventive controls.

- Enhances long-term AI trustworthiness: Beta testing not only checks real-world performance but also builds confidence in the reliability, resilience, and trustworthiness of AI systems over their lifetime in production.
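The idea of decision thresholds with a fallback can be illustrated with a small routing function: predictions above a confidence threshold are acted on, and the rest go to human review. This is a sketch only; the `Decision` type, the `needs_human_review` action, and the 0.8 default are hypothetical, and beta data would be used to pick a threshold that balances automation against review workload.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    source: str  # "model" or "fallback"


def decide(label, confidence, threshold=0.8):
    """Act on the model's label only when its confidence clears the threshold."""
    if confidence >= threshold:
        return Decision(action=label, source="model")
    return Decision(action="needs_human_review", source="fallback")


def review_rate(scored_predictions, threshold):
    """Share of (label, confidence) predictions that would be routed to review."""
    routed = sum(1 for _, confidence in scored_predictions if confidence < threshold)
    return routed / len(scored_predictions)


# Hypothetical beta predictions: (label, model confidence)
beta_predictions = [("approve", 0.9), ("deny", 0.5), ("approve", 0.7), ("deny", 0.95)]

print(decide("approve", 0.93))             # handled by the model
print(decide("approve", 0.41))             # routed to the fallback path
print(review_rate(beta_predictions, 0.8))  # review workload at this threshold
```

Comparing the review rate and the error rate at several candidate thresholds during the beta shows how much human oversight each setting would require.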

Conclusion

Beta testing strongly affects how well AI tools work in real-world situations where data, users, and conditions keep changing. It validates accuracy, robustness, and ethical behavior, and it surfaces risks such as data drift, bias, and erratic decision-making that internal testing alone may not reveal. Without proper beta testing, AI systems risk being unreliable after deployment.

Effective AI Beta Testing requires both specialized knowledge and proven quality assurance methods. Collaborating with a professional QA testing partner gives access to AI-focused testing frameworks, a skilled QA team, and continuous monitoring practices. As a result, the organization can be more confident that its AI tools are ready for production, able to scale, and trusted by users from the start.

FAQs

1. What is Beta Testing in AI tools development?

Beta Testing is the stage in which an AI-based tool is evaluated by real users in real settings to confirm accuracy, behavior, usability, and reliability before rollout to all users.

2. How is Beta Testing different for AI compared to traditional software?

Traditional software does not change or learn, whereas AI systems do. Beta Testing for AI therefore validates decision quality, bias, data drift, and real-world performance, not just whether fixed outputs pass.

3. Why can’t AI tools rely only on internal testing?

Internal testing alone cannot fully replicate unpredictable user behavior, data variability, or real operational environments, all of which are critical to validating AI.

4. What risks does Beta Testing help reduce?

Beta Testing reduces the risk of biased results, incorrect predictions, lost user trust, security weaknesses, and heavy financial losses from post-launch problems, among others.

5. Should Beta Testing be handled by specialized QA teams?

Yes. QA teams with experience in model validation, data behavior analysis, and AI-specific testing strategies are essential for getting AI tools ready for production.